"The lowest‑cost place to put AI will be in space, and that will be true within two years, maybe three at the latest," recently declared Elon Musk. Having promised to solve driving, transit, and energy several times each (any day now, he assures us, as he has for some years), and by remarkable coincidence owning a rocket company about to IPO, he hereby proclaims, with the quiet authority of a man who has never once been wrong about a timeline, that the best place to run quasi-sentient spreadsheets will be, in mere point of fact, the infinite vacuum of outer space.

Elon launched a car into space for no reason, and true, that was fun. So why not $80 billion in hardware on a 3-year depreciation curve? I'll tell you why not. Picture a skyscraper. Now remove the ground, the air, the firefighters, the doors that open, and the electricians who hate their lives but still show up at 3 a.m. because divorce is expensive and copper wire doesn't judge. What you're left with is an orbital data center. A very expensive brick falling forever in a place where the repair guy charges $400 million per house call and the warranty explicitly covers nothing because you put it in space, you absolute psychopath.

On Earth, you buy an 8‑GPU Nvidia H200 box and it already feels like grand theft capitalism. Hundreds of thousands of dollars for a humming metal rectangle that turns electricity into slightly better autocomplete. You stack ten thousand of these, and you’ve got a few billion dollars of hardware quietly roasting a concrete slab somewhere in Nevada, humming like a beehive of gradients and loss functions.

In orbit, those same ten thousand boxes are contraband. Every kilogram is a confession. You buy servers and their escape velocity, shoving nine hundred thousand kilograms of anxious silicon through the sky at Mach WTF. You thought GPUs were expensive? Wait until you meet electricity in space.

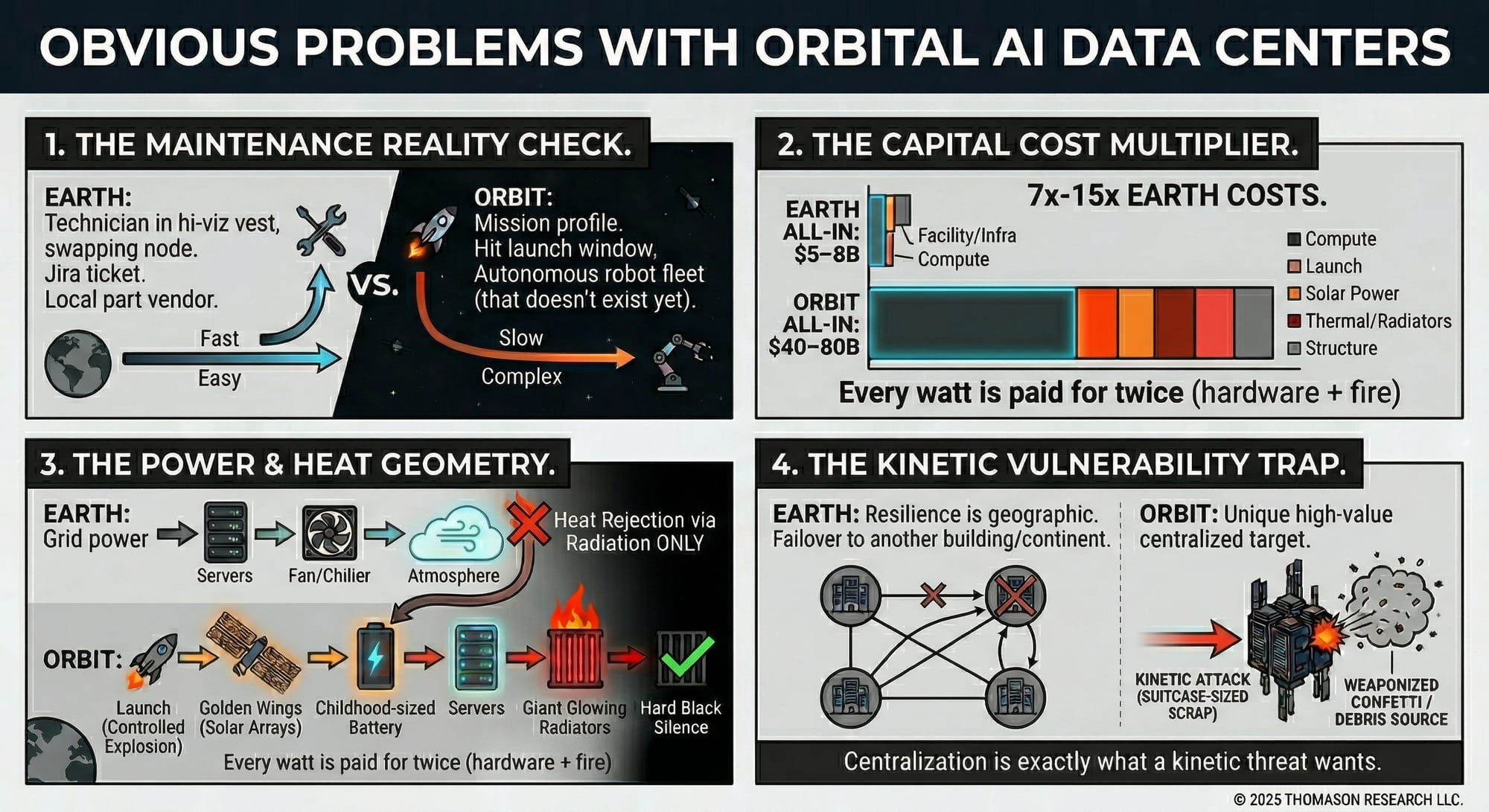

On Earth, power comes out of the wall. It’s boring. It smells like regulations and utility commissions and bearded guys named Ron with clipboards. In orbit, power has to arrive on golden wings. Gigantic solar arrays unfolding like origami made of PhD theses and procurement meetings. You need enough of them to feed eighty megawatts of artificial genius and every one of those panels got there riding a controlled explosion. Every watt is paid for twice, once in hardware, and once in fire.

Then there’s the night.

On Earth, when the sun goes down, you yawn and keep training. In space, every orbit drags you through a blackout. So you build battery packs the size of people’s childhoods. You hoard energy like a prepper with a basement full of canned beans, except your beans explode in vacuum if you get the math wrong. All of it just to make sure your chatbot doesn’t forget the word “however” at perigee.

Cooling? On Earth, you move air and water. Fans scream, chillers grind, somebody from facilities mutters about delta‑T and goes outside for another smoke. The heat just…leaves. It wanders off into the atmosphere and becomes someone else’s climate problem.

In orbit, there is nowhere for heat to go. No air, no breeze, just hard black silence, like dinner with your wife after you stuck the kid's college fund into bitcoin. So you bolt giant radiators to your floating supercomputer, metal wings whose only job is to glow with all the wasted ambition you pumped into those GPUs. You pay a 5x-10x capex premium to be slightly warmer than the rest of the universe.

And all those mundane, stupid miracles of Earth data centers? The stuff you never put in the slide deck?

Down here under Elysium, a tech in a hi‑viz vest can walk into your hot aisle, swear at a blinking amber LED, and swap a bad node for a good one before lunch. You can roll a scissor lift into the room, drop a busway, run another fiber trunk. Parts come in cardboard. Dead gear leaves on a pallet.

Up there, every loose screw is shrapnel forever. Every failed power supply is a small metal coffin because nobody’s going out during lunch break to reseat a cable. Maintenance is no longer a Jira ticket, now it's a mission profile. You don’t send a guy, you send a spacecraft, or maybe a fleet of autonomous robots that don't exist yet. You don't file an RMA, you hit a launch window.

So you build a cousin of the International Space Station that hates people and loves inference. Trusses, docking ports, gyros, thrusters, comms, robotics, shields. An entire cathedral of engineering so your language model can argue with someone about movie rankings from low Earth orbit.

And after all this lunacy, after the rockets and radiators and orbital batteries, what do you actually get?

You get something strictly worse than a concrete building in Nevada.

Because down here, we already know how to pour a slab, run a substation, and drop a hundred megawatts into rows of very nervous metal. We know how to cool it, insure it, secure it, and complain about the utility bill. On Earth, your constraint is zoning and patience. In space, your constraint is physics and hubris.

Even if you make launch ten times cheaper, a hundred times cheaper, it doesn’t fix the geometry. You still have to bring everything with you: the power plant, the coolant, the structure, the spares, the tools. In orbit, there is no “local contractor.” There is no “call the vendor.” There is only mass and failure modes and that quiet feeling in your stomach when you realize every mistake has a multi‑million‑dollar burn rate.

An orbital data center is what happens when you take a problem that money already solves on Earth and ask, “What if we added rockets, vacuum, and ten extra nines of complexity?” It's the Clippy the Paperclip of infrastructure. It’s an ISS made of GPUs and wishful thinking, a monument to the idea that if something is hard and shiny enough, it must be smart.

But it’s not smart.

It’s a very glamorous way to do a very boring thing.

If you want to waste billions to impress the future, build a telescope.

If you want to train models, build a box on dirt with ugly transformers humming out back.

Space is for the things we truly cannot do down here. Data centers are not one of them.

But sure, let's think about this.

After all, we're long the grifts thesis, aren't we?

1. Server fleet mass and cost

Assume a 10,000-node cluster of 8-GPU H200 Lenovo-class servers:

- Approximate server mass (fully configured, 8× H200, CPUs, memory, cooling): ≈90 kg per node.

Total mass of servers:

$$10{,}000 \times 90 \text{ kg} = 900{,}000 \text{ kg} \quad (900 \text{ metric tons})$$

- Approximate per-server cost (8× H200, plus chassis, CPUs, NICs, etc.): $400,000–$600,000.

Total hardware cost:

$$\text{Low: } 10{,}000 \times 400{,}000 = 4{,}000{,}000{,}000 \quad (\approx \$4\text{B})$$

$$\text{High: } 10{,}000 \times 600{,}000 = 6{,}000{,}000{,}000 \quad (\approx \$6\text{B})$$

So just the compute boxes are a $4–6B line item before you even talk about space.
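For anyone who wants to poke at the inputs, here is a minimal Python sketch of the same arithmetic. The function and constant names are made up for illustration; the numbers are the estimates above, not vendor figures.

```python
# Back-of-envelope fleet totals, using the estimates from this section.
NODES = 10_000
KG_PER_NODE = 90                         # estimated mass of one 8-GPU server
COST_PER_NODE_USD = (400_000, 600_000)   # estimated (low, high) price per server


def fleet_mass_kg(nodes=NODES, kg_per_node=KG_PER_NODE):
    """Total server mass in kilograms."""
    return nodes * kg_per_node


def fleet_cost_usd(nodes=NODES, cost_range=COST_PER_NODE_USD):
    """(low, high) hardware cost in dollars."""
    low, high = cost_range
    return nodes * low, nodes * high


print(fleet_mass_kg())    # 900000
print(fleet_cost_usd())   # (4000000000, 6000000000)
```

Change `KG_PER_NODE` or the price band and every downstream number in this piece moves with it.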

2. Launch mass and Falcon Heavy flights

Use Falcon Heavy as the workhorse:

- Payload to low Earth orbit (expendable or near-expendable configuration): ≈63,800 kg.

- Server payload mass: 900,000 kg.

Server launches required:

$$\frac{900{,}000 \text{ kg}}{63{,}800 \text{ kg/launch}} \approx 14.1$$

Call it 15 Falcon Heavy launches just for the servers.

Representative per-launch price:

- Conservatively $100–150M per mission (depending on configuration and customer).

So server launch cost is on the order of:

$$\text{Low: } 15 \times \$100\text{M} = \$1.5\text{B}$$

$$\text{High: } 15 \times \$150\text{M} = \$2.25\text{B}$$

That's your "just getting the racks into orbit" bill: $1.5–2.3B.
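The launch count is just a ceiling division, sketched below. It assumes every rocket flies packed to its full mass limit and ignores fairing volume, so the real count would only go up. The helper names are illustrative.

```python
import math

FH_LEO_KG = 63_800                                # Falcon Heavy payload to LEO used above
PRICE_PER_LAUNCH_USD = (100_000_000, 150_000_000) # assumed (low, high) price per mission


def launches_needed(payload_kg, capacity_kg=FH_LEO_KG):
    """Whole launches required; you cannot buy 0.1 of a rocket."""
    return math.ceil(payload_kg / capacity_kg)


def launch_cost_usd(launches, price_range=PRICE_PER_LAUNCH_USD):
    """(low, high) launch bill in dollars."""
    low, high = price_range
    return launches * low, launches * high


n = launches_needed(900_000)
print(n)                    # 15
print(launch_cost_usd(n))   # (1500000000, 2250000000)
```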

3. Power budget — 80 MW of thirsty silicon

Take a realistic planning envelope per 8-GPU H200 server:

- GPUs: 8 × 700 W = 5.6 kW.

- Rest of system (CPUs, memory, NICs, SSDs, NVSwitch, fans/pumps, overhead): ≈2–4 kW.

- Design power per node: 8 kW (with 10 kW as a worst-case cap).

Total IT power:

$$10{,}000 \times 8\text{ kW} = 80\text{ MW}$$

At 6 kW/node (throttled/mixed): 60 MW. Take 80 MW as the working number.

On Earth, this is just a big substation problem. In orbit, it becomes:

- Solar generation sized for >80 MW plus margin.

- Energy storage for eclipse periods.

- Power conditioning, distribution, and redundancy.
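The power envelope above can be reproduced with a few lines of Python. This is a sketch of the planning arithmetic only; the 2–4 kW "rest of system" band is the estimate from this section, and the function names are hypothetical.

```python
def node_power_w(gpus=8, gpu_w=700, rest_w=(2_000, 4_000)):
    """(low, high) draw of one server in watts: GPUs plus everything else."""
    gpu_total = gpus * gpu_w          # 8 x 700 W = 5,600 W
    return gpu_total + rest_w[0], gpu_total + rest_w[1]


def cluster_power_mw(nodes, node_kw):
    """Total IT load in megawatts for a given per-node design power."""
    return nodes * node_kw / 1000


print(node_power_w())               # (7600, 9600)
print(cluster_power_mw(10_000, 8))  # 80.0
print(cluster_power_mw(10_000, 6))  # 60.0
```

The 8 kW design point sits near the bottom of the 7.6–9.6 kW range, so 80 MW is not a pessimistic number.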

4. Space solar arrays and energy storage

4.1 Solar array sizing

You need enough generation to cover 80 MW continuous IT plus overheads. Even assuming 100 MW nameplate covers 80 MW average after orbital night and inefficiencies, you're looking at a ~100 MW class system.

Ballpark cost at $80,000–100,000 per kW all-in (hardware, integration, launch amortized), priced against the 80,000 kW IT load:

$$\text{Low: } 80{,}000 \text{ kW} \times \$80{,}000/\text{kW} = \$6.4\text{B}$$

$$\text{High: } 80{,}000 \text{ kW} \times \$100{,}000/\text{kW} = \$8.0\text{B}$$

Your orbital power plant is itself a $6–8B subsystem.
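A quick sketch of the array pricing, with the capacity left as a parameter. The per-kW band is the ballpark above, not a quoted price. Note that the $6–8B figure prices 80,000 kW; run the same band against the full 100 MW nameplate and you get $8–10B instead.

```python
USD_PER_KW = (80_000, 100_000)   # all-in ballpark band from this section


def array_cost_usd(capacity_kw, usd_per_kw=USD_PER_KW):
    """(low, high) cost of a space solar array of the given capacity."""
    return tuple(capacity_kw * price for price in usd_per_kw)


print(array_cost_usd(80_000))    # (6400000000, 8000000000)
print(array_cost_usd(100_000))   # (8000000000, 10000000000)
```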

4.2 Batteries for orbital night

In LEO, each orbit has a night segment (~35–45 minutes). To keep 80 MW running during eclipse:

$$80 \text{ MW} \times \frac{2}{3} \text{ h} \approx 53 \text{ MWh}$$

Add margin: 70–100 MWh of battery. At $2,000–5,000 per kWh all-in:

- 70 MWh (70,000 kWh) at $2,000/kWh → $140M.

- 100 MWh at $5,000/kWh → $500M.

Call it a few hundred million to around a billion dollars for batteries and power electronics.
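The battery sizing is load times eclipse time, plus margin. The sketch below reproduces it under the same assumptions (a 40-minute eclipse, the cost band above). It does not model depth-of-discharge limits or cell aging, both of which would push the required capacity higher.

```python
def eclipse_energy_mwh(load_mw, eclipse_minutes):
    """Energy needed to carry the load through one eclipse."""
    return load_mw * eclipse_minutes / 60


def battery_cost_usd(capacity_mwh, usd_per_kwh):
    """All-in battery cost for a given capacity and price per kWh."""
    return capacity_mwh * 1_000 * usd_per_kwh


print(round(eclipse_energy_mwh(80, 40), 1))   # 53.3
print(battery_cost_usd(70, 2_000))            # 140000000
print(battery_cost_usd(100, 5_000))           # 500000000
```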

5. Heat rejection radiators — the size of your mistakes

Heat in a vacuum leaves via radiation only. For ~100 MW total waste heat:

- ISS uses hundreds of square meters of radiators to dump ~100 kW. Scale naively and you need ~1,000× the area for ~100 MW.

- Mass follows area. Cost follows mass.

- Additional commitments: pumps, coolant loops, redundancy; assembly and deployment in orbit; micrometeoroid shielding.

Radiators and plumbing: $5–10B is a reasonable band for a 100 MW-class orbital heat-rejection system.
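As a cross-check on the naive ISS scaling, radiator area can also be estimated from the Stefan–Boltzmann law, $P = \varepsilon \sigma A T^4$. The sketch below assumes an idealized flat panel at 300 K with emissivity 0.9, radiating from both faces to deep space. Those three values are my assumptions for illustration, and the model ignores absorbed sunlight and Earth's infrared, so real radiators would need to be larger.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k=300.0, emissivity=0.9, sides=2):
    """Idealized panel area needed to radiate heat_w watts to deep space.

    Assumes a uniform panel temperature and no incoming heat load,
    which is optimistic for anything in low Earth orbit.
    """
    flux_per_side = emissivity * SIGMA * temp_k ** 4   # W per m^2 per face
    return heat_w / (flux_per_side * sides)


area = radiator_area_m2(100e6)            # 100 MW of waste heat
print(f"{area:,.0f} m^2 of two-sided panel")
```

Under these assumptions the answer lands on the order of 10^5 square meters, the same ballpark as multiplying the ISS's hundreds of square meters by a thousand.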

6. Structural, station-keeping, and support systems

- Truss structures for arrays and radiators.

- Mounting for server modules (pressurized or not).

- Attitude control, reaction wheels, thrusters, propellant.

- Communications, docking, and robotic maintenance.

Historic analog: ISS total program cost ≈$100–150B. Your space data center can drop life support but must massively scale power and cooling.

Structural modules, propulsion, robotics, docking, comms: $10–20B as a placeholder.

7. Extra mass and extra launches

Beyond the 900,000 kg of servers:

- Arrays at ~20 kg/kW and 80,000 kW: $$80{,}000 \times 20 = 1{,}600{,}000 \text{ kg}$$

- Radiators and truss: easily another million-plus kilograms.

Non-server payload mass (arrays + radiators + structure + batteries + modules): 3–5 million kg.

Launches for infrastructure:

$$\frac{3{,}000{,}000 \text{ to } 5{,}000{,}000 \text{ kg}}{63{,}800 \text{ kg/launch}} \approx 47\text{–}78 \text{ launches}$$

- ≈50–80 Falcon Heavy launches for infrastructure.

- Add ≈15 for servers → ≈65–95 launches total.

Launch cost at $100–150M each:

- Low side (65 × $100M) = $6.5B.

- High side (95 × $150M) = $14.25B.

Launch services: $7–15B all-in.
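Here is the same launch tally as a short Python sketch. It uses strict ceiling division, which gives slightly lower counts (48–79 for infrastructure, 63–94 in total) than the rounded 50–80 and 65–95 used above; the rounding up is a deliberate cushion, not an error. Names are illustrative.

```python
import math

FH_LEO_KG = 63_800   # Falcon Heavy payload to LEO used in this estimate


def launches(mass_kg, capacity_kg=FH_LEO_KG):
    """Whole launches needed, assuming each flies at its full mass limit."""
    return math.ceil(mass_kg / capacity_kg)


array_mass_kg = 80_000 * 20                  # 80,000 kW at ~20 kg/kW
server_launches = launches(900_000)          # 15
infra_launches = (launches(3_000_000), launches(5_000_000))
total_launches = tuple(n + server_launches for n in infra_launches)

print(array_mass_kg)     # 1600000
print(infra_launches)    # (48, 79)
print(total_launches)    # (63, 94)

# Cost using the rounded counts from the text (65 and 95 launches).
print(65 * 100_000_000, 95 * 150_000_000)    # 6500000000 14250000000
```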

8. Aggregate orbital data center cost

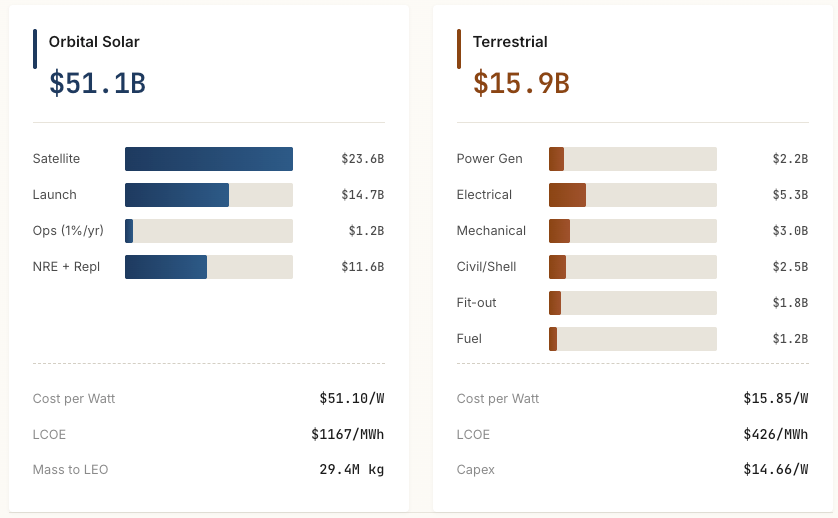

- Compute hardware (10k servers): $4–6B.

- Launch (servers + infra): $7–15B.

- Solar arrays and power electronics: $6–8B.

- Batteries and energy storage: $0.2–1B.

- Radiators and thermal systems: $5–10B.

- Structure, station-keeping, robotics, integration: $10–20B.

Total:

- Lower bound fantasy: ~$30B if every assumption breaks your way.

- More honest band: $40–80B for a single 10,000-server, 80 MW orbital AI data center.
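Summing the line items is worth doing explicitly, so here is a small sketch. The dictionary simply restates the ranges listed above in billions of dollars. Added up, they come to roughly $32–60B, so the $40–80B band includes headroom beyond the itemized list; treating that gap as unitemized contingency and overruns is an assumption, since the list does not break it out.

```python
# (low, high) in billions of dollars, copied from the list above.
LINE_ITEMS_BUSD = {
    "compute hardware": (4.0, 6.0),
    "launch (servers + infra)": (7.0, 15.0),
    "solar arrays and power electronics": (6.0, 8.0),
    "batteries and energy storage": (0.2, 1.0),
    "radiators and thermal systems": (5.0, 10.0),
    "structure, station-keeping, robotics": (10.0, 20.0),
}


def total_busd(items=LINE_ITEMS_BUSD):
    """(low, high) sum of all line items, in billions of dollars."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


low, high = total_busd()
print(f"${low:.1f}B to ${high:.1f}B")   # $32.2B to $60.0B
```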

9. Earth comparison — same compute, fewer rockets

- 10,000 servers: still $4–6B.

- Terrestrial AI data center build cost: ~$10–20M per MW of IT for cutting-edge liquid-cooled facilities.

- For 80 MW IT: $800M–$1.6B for building, power, and cooling plant.

- Total facility + infra: $1–2B.

So:

- Earth: $5–8B all-in for roughly the same compute footprint.

- Orbit: $40–80B for a fragile version that's harder to maintain and impossible to casually expand.

The same 10,000 H200 servers cost maybe 1–2× their hardware price on Earth once you include a modern AI facility. In space, the scaffolding to power, cool, and keep them from drifting into useless metal confetti pushes the total to roughly 7–15× the hardware price.
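The comparison reduces to two ratios against the hardware price, sketched below. The facility rate and the orbit band are the figures from this piece. Pairing low with low and high with high is a simplification I chose for the sketch, since the same servers sit in both totals.

```python
HARDWARE_BUSD = (4.0, 6.0)        # 10,000 servers, billions of dollars
ORBIT_TOTAL_BUSD = (40.0, 80.0)   # the "more honest band" for orbit


def facility_cost_busd(it_mw, musd_per_mw=(10, 20)):
    """(low, high) terrestrial build cost in billions for a given IT load."""
    return tuple(it_mw * rate / 1000 for rate in musd_per_mw)


def multiple_of_hardware(total_busd, hardware_busd=HARDWARE_BUSD):
    """Total cost as a multiple of hardware cost, low-with-low and high-with-high."""
    return total_busd[0] / hardware_busd[0], total_busd[1] / hardware_busd[1]


facility = facility_cost_busd(80)   # (0.8, 1.6)
earth_total = (HARDWARE_BUSD[0] + facility[0], HARDWARE_BUSD[1] + facility[1])

print(multiple_of_hardware(earth_total))        # a bit over 1x on Earth
print(multiple_of_hardware(ORBIT_TOTAL_BUSD))   # roughly 10x to 13x in orbit
```

Mix the extremes instead (cheap servers with expensive orbit, or the reverse) and the orbital multiple spreads to roughly 7–20×.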

But don't take my word for it. Really.

So I wrote all that, and then I found that Andrew McCalip had already said it first and done it better. Light years better. His Economics of Orbital vs Terrestrial Data Centers calculator is a marvelous, interactive rathole. Read it. Then buy SpaceX stock. Go ahead, I dare you.

But let's say you’ve spent forty, fifty, maybe eighty billion dollars building your cathedral of GPUs in orbit. A hundred megawatts of solar wings, radiators the size of small countries, trusses, robotics, carefully choreographed launches. A sky‑borne monument to capital and cleverness.

And all of it is just…up there.

In plain sight. On predictable orbits you can look up with a telescope and plot with a spreadsheet. No moat, no fence, no armed guards walking the perimeter. Just Newton, silently advancing your tumble through the sky.

On Earth, a data center has to contend with boring catastrophes. You know, the floods, fires, human error, the occasional backhoe driver with anger issues. You harden against those. You buy insurance. Redundancy lives in another region, another continent. The threats are messy, but local.

In orbit, the worst‑case failure mode is clean and elegant: one object, moving very fast, in exactly the wrong place.

You don’t need a rival superpower with a Bond‑villain laser. You don’t need a secret space plane or black‑budget kill vehicle. You just need something dumb, dense, and on collision course. A rock‑sized lump of metal with no future and bad intentions.

Kinetic energy in orbit is obscene. At several kilometers per second, a chunk of scrap the size of a suitcase hits like a small bomb. The relative velocity turns bolts into plasma and aluminum into sandblasting. Your multi‑billion‑dollar heat lamp explodes into a cloud of weaponized confetti.
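To put a rough number on "small bomb", kinetic energy is $E = \tfrac{1}{2} m v^2$. The sketch below assumes a 10 kg fragment and a 10 km/s closing speed; both are illustrative values I picked, not measurements, and the TNT conversion uses the conventional 4.184 MJ per kilogram.

```python
TNT_J_PER_KG = 4.184e6   # conventional energy equivalent of 1 kg of TNT


def kinetic_energy_j(mass_kg, speed_m_s):
    """Classical kinetic energy in joules."""
    return 0.5 * mass_kg * speed_m_s ** 2


def tnt_equivalent_kg(energy_j):
    """Energy expressed as kilograms of TNT."""
    return energy_j / TNT_J_PER_KG


energy = kinetic_energy_j(mass_kg=10, speed_m_s=10_000)
print(energy)                              # 500000000.0
print(round(tnt_equivalent_kg(energy)))    # about 120 kg of TNT
```

Energy scales with the square of speed, so halving the closing speed cuts the punch to a quarter, and it is still plenty.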

Best case, you lose arrays and radiators and half your power budget in under a second. Worst case, you don’t just lose the station, you get promoted to debris source. Every panel, bracket, and server chassis becomes a new threat, fanning out on intersecting orbits. You have a public‑domain minefield.

And you don’t even need a nation‑state to do it.

We are engineering toward a world where serious amateur rocketry, billionaires with private launch toys, and small states with grudges can all throw hardware into low Earth orbit. Guidance and tracking are off‑the‑shelf. Open‑source tools will happily tell you when your enemy’s billion‑dollar compute stack is in the right patch of sky.

For a hostile government, your orbital AI cluster is the perfect target:

- It’s unique and high‑value.

- It’s not ambiguous like a city or a hospital.

- It’s centralized, fragile, and internationally obvious.

- No need to hack the model.

- No need to breach the air‑gapped training network.

- Just throw metal at it and let orbital mechanics do the rest.

On Earth, resilience is geographic. Lose a building, fail over to another. Lose a region, reroute to a different continent. The network routes around damage, data gets backed up, rebuilt, retrained. A physical attack is ugly, but it’s constrained by borders, defenses, politics, and gravity.

In orbit, your “region” is a single highly optimized, grotesquely expensive object. There is no graceful degradation. You get a 10‑kilometer cloud of junk with your logo on it.

And this is also the deal‑breaker, because the more you optimize the economics of an orbital data center, the worse its security story gets.

To make the numbers look even remotely sane, you centralize with one big power plant, one big cluster, one big structure to amortize your launches and solar and radiators. But centralization is exactly what a kinetic threat wants. You’ve concentrated value so efficiently that impact is now a strategy.

The whole pitch for orbital compute is supposed to be we’re smarter than gravity now, we’ve unlocked a new domain. But anyone with enough money, malice, and a missile can erase the whole installation in one pass.

You could spend decades perfecting fault‑tolerant power systems, triple‑redundant coolant loops, radiation‑hardened electronics, autonomous repair robots. You can out‑engineer the vacuum, the sunlight, the darkness.

But there is no amount of clever engineering that turns “one rock, wrong orbit, wrong time” into a survivable event.

On Earth, a data center is infrastructure. In orbit, it’s a target.

And that’s the coup de grâce for orbital data centers. When you’re finally done building your shimmering monument to ego, the cheapest, dumbest weapon in the arsenal can still turn it into dust in a single, silent flash. Who's going to underwrite that?

So the answer to AI's power problem isn't up. It's down. Way down. Beneath your feet, where the Earth has been running a fusion reactor's hand-me-down for four and a half billion years and never once sent anyone an invoice.

Geothermal.

Not the sexy kind of energy. Nobody's putting a geothermal vent on the cover of Wired. It doesn't photograph well. It has no contrail. Elon will never stand in front of one wearing a leather jacket.

But the Earth's core is roughly the temperature of the surface of the sun, and it has been that way since before anything with a spine existed. It is not going to stop. It does not care about your quarterly earnings call. It does not have an orbital night.

You drill down. You pump water into hot rock. Steam comes up. Steam turns turbines. Turbines make electricity. Electricity feeds GPUs. GPUs argue about whether a hot dog is a sandwich. The circle of life.

The engineering is hard. The drilling is expensive. The rocks don't always cooperate and sometimes the geology tells you to go to hell, which, coincidentally, is where the heat is. But "hard" and "impossible" are different words, and one of them involves reinventing the International Space Station so your chatbot can hallucinate from low Earth orbit.

You want to power the next 100 years of AI? Don't look up. Start drilling.